Priyadarshini Kumari

I work at Apple, focusing on the intersection of machine learning and health.

Previously, at Sony AI, I contributed to a range of projects, from developing data-efficient machine learning techniques for graph neural networks to creating multimodal perception models that integrate text and olfactory inputs. My work spanned applications in biomedical research, olfaction, and gastronomy.

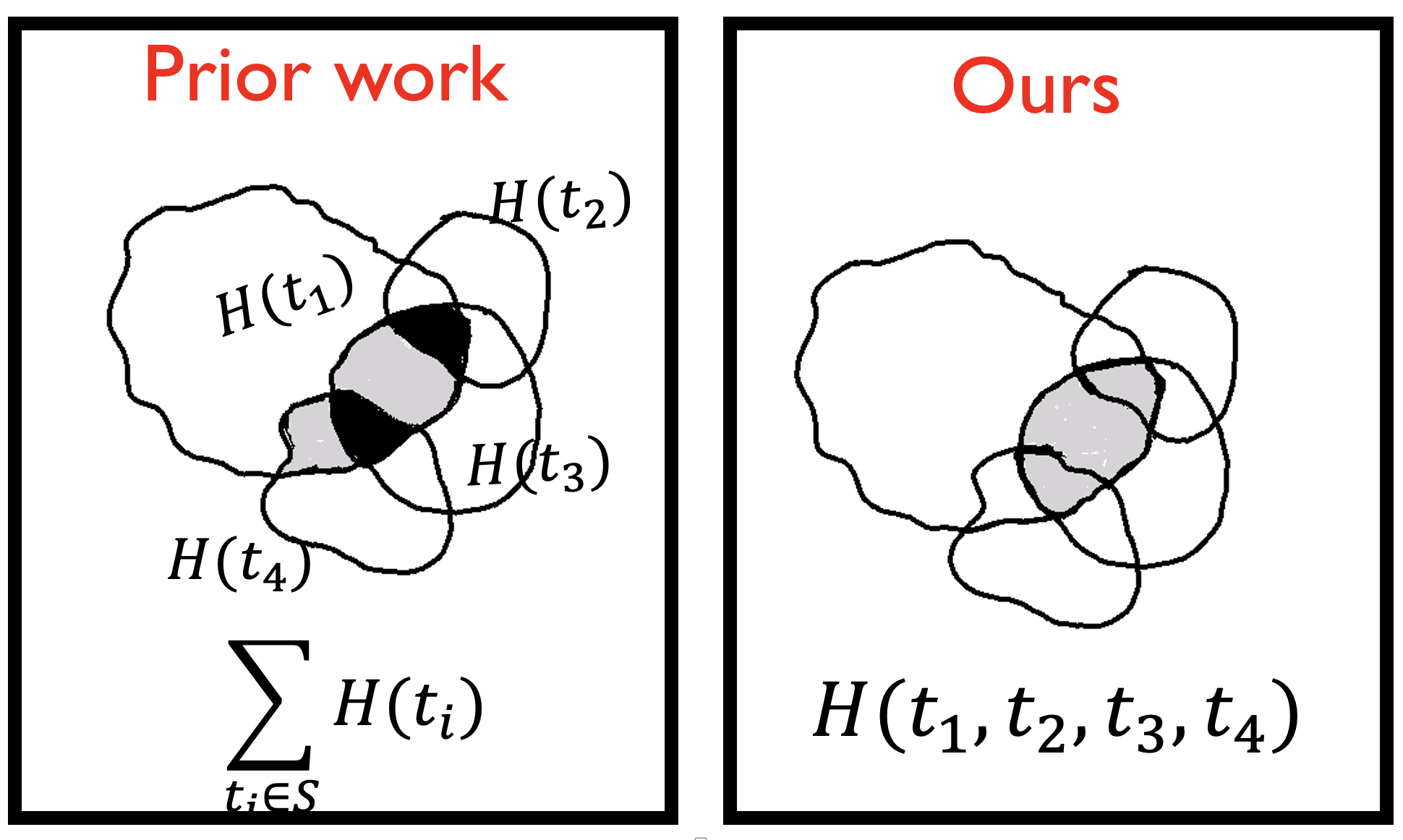

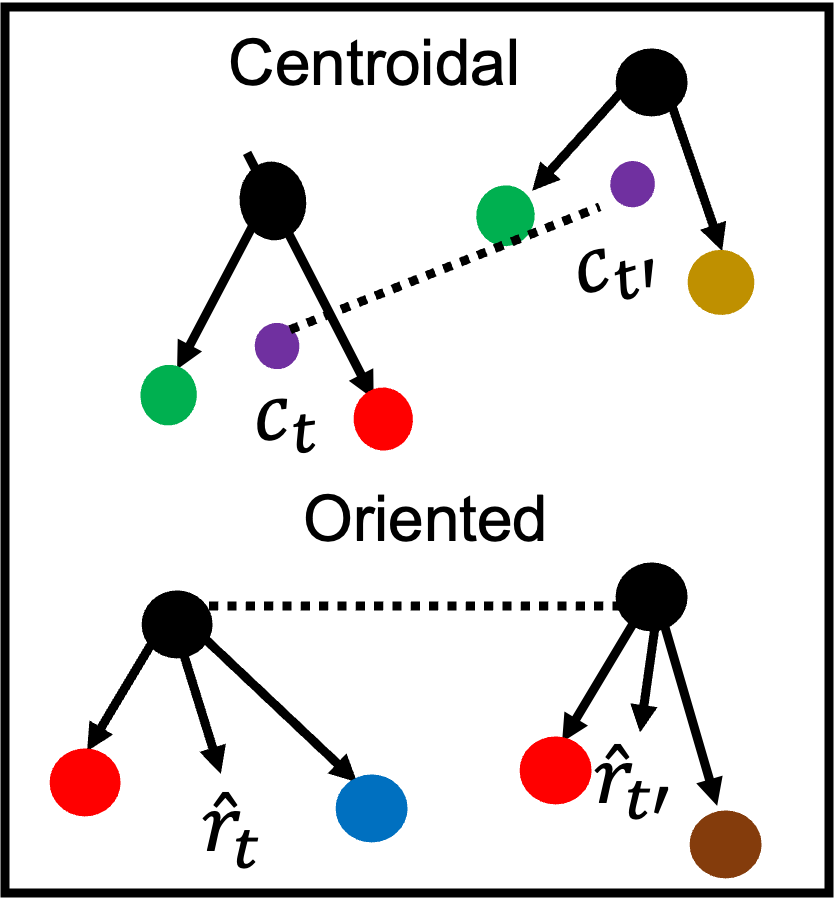

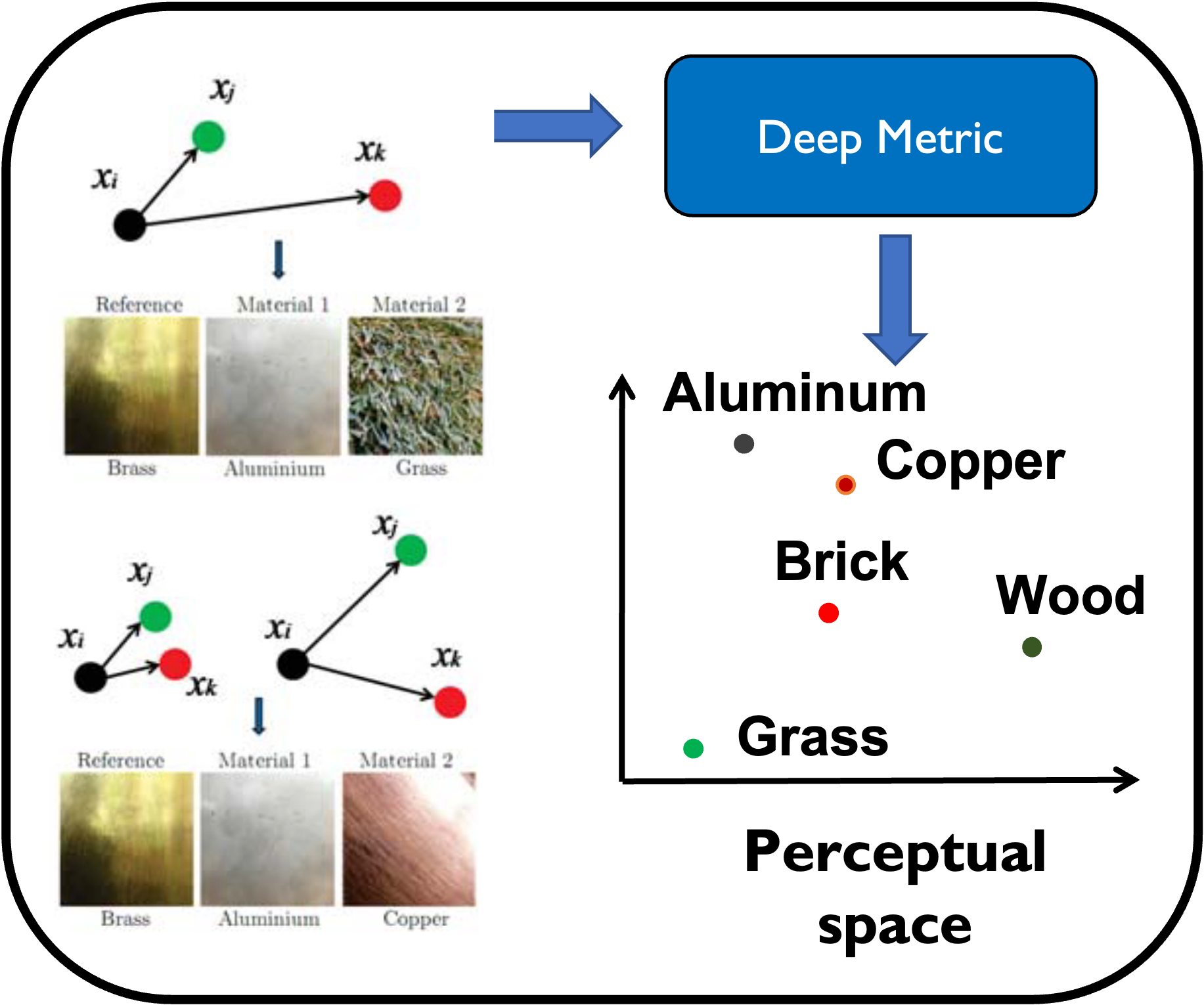

I received my Ph.D. from IIT Bombay, advised by Prof. Subhasis Chaudhuri and Prof. Siddhartha Chaudhuri. My thesis was on Label-Efficient Distance Metric Learning. Before that, I completed my master’s, also at IIT Bombay, where I developed multimodal rendering techniques that combined haptic, visual, and auditory feedback to make 3D models of heritage sites accessible to the visually impaired.

News

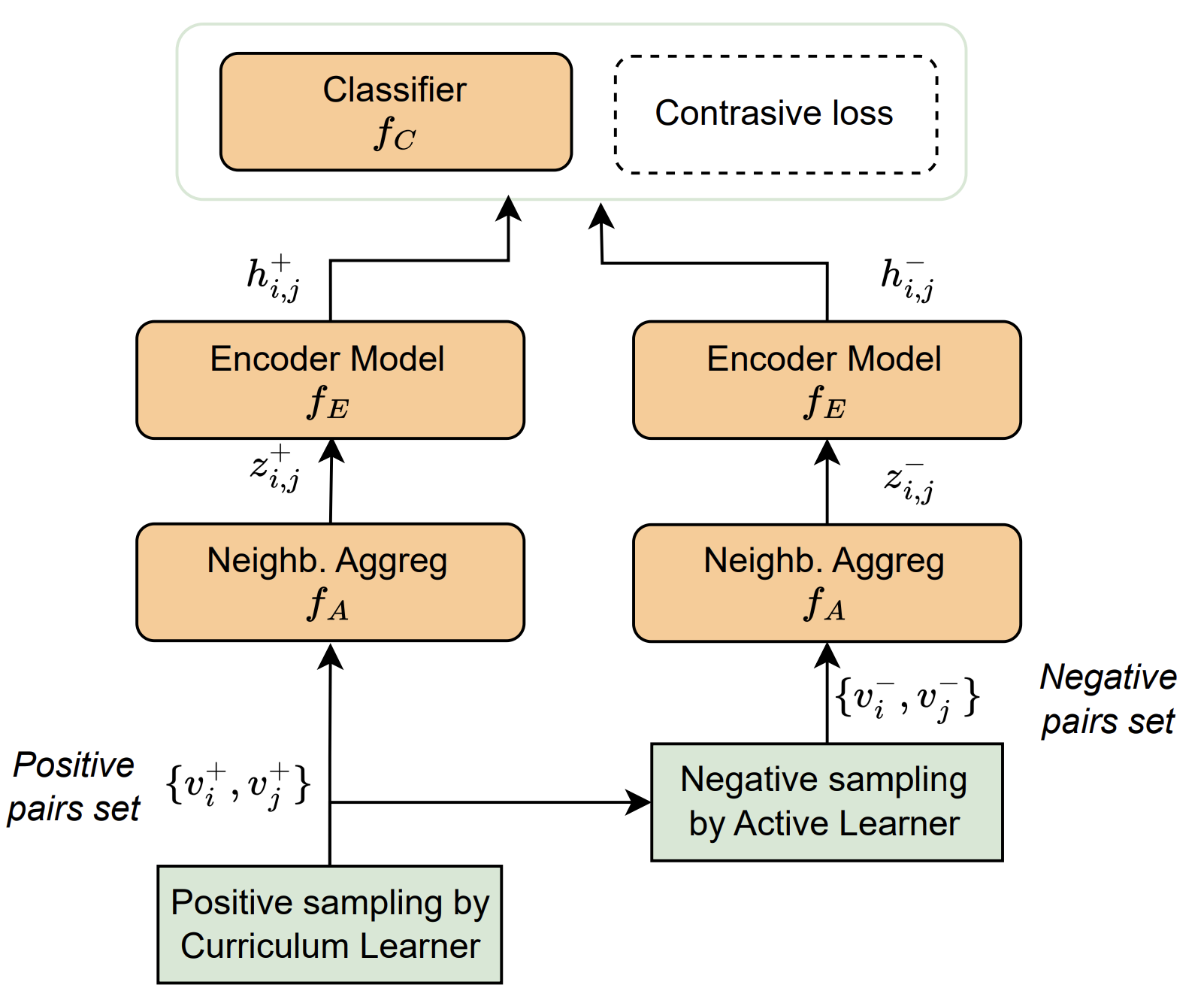

| Jul 25, 2024 | Our paper “Link prediction for hypothesis generation: an active curriculum learning infused temporal graph-based approach” is accepted at Artificial Intelligence Review 2024. |

|---|---|

| Jul 12, 2024 | Our paper “CosFairNet:A Parameter-Space based Approach for Bias Free Learning” is accepted at BMVC 2024. |

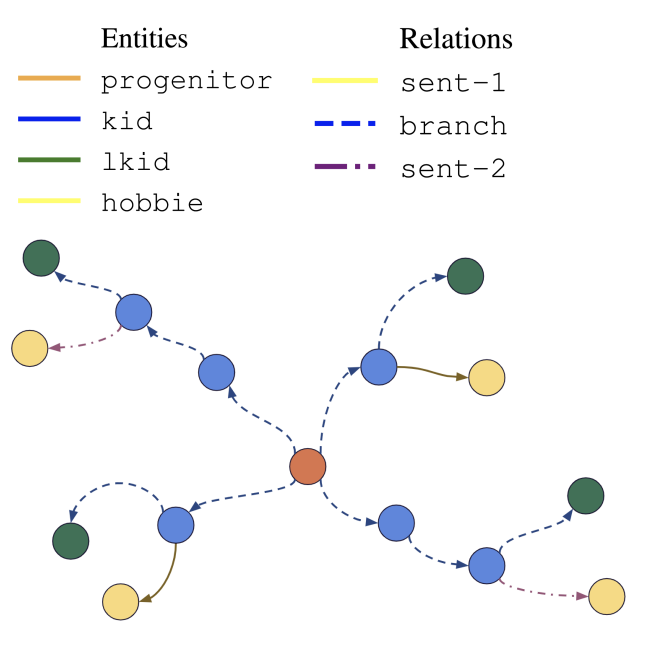

| Oct 31, 2023 | Our paper “FRUNI and FTREE synthetic knowledge graphs for evaluating explainability” is accepted at NeurIPS XAIA workshop 2023. |

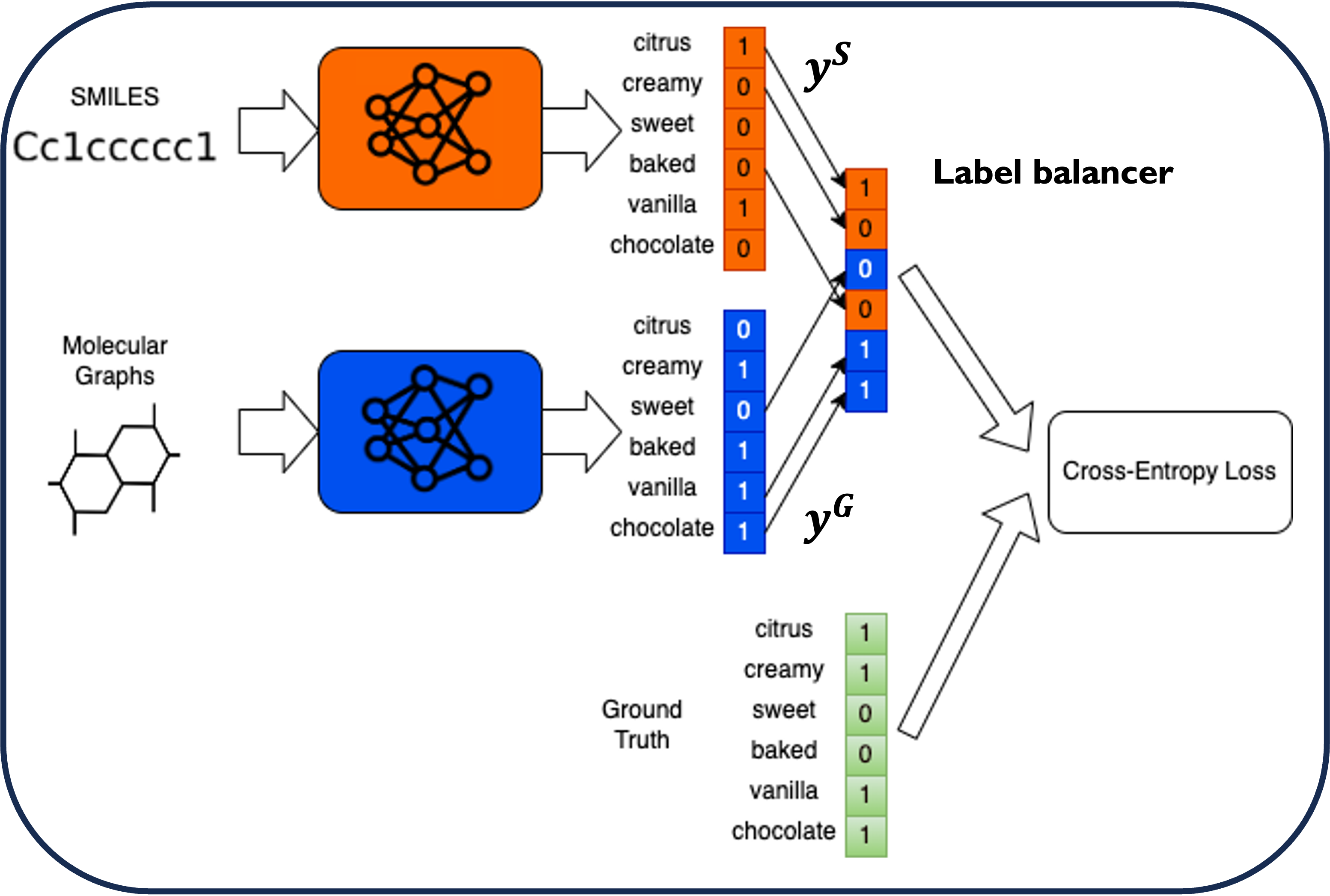

| Sep 21, 2023 | Two papers “Perceptual metrics for odorants: learning from non-expert similarity feedback using machine learning” and “Comparing molecular representations, e-nose signals, and other featurization, for learning to smell aroma molecules” are accepted at PLOS One |

| Jul 15, 2023 | Our paper “Optimizing Learning Across Multimodal Transfer Features for Modeling Olfactory Perception” was accepted to Multimodal SIGKDD 2023 |

| Jul 12, 2023 | I gave a talk on “Using the dynamics of discovery: A temporal graph-based approach to automated hypothesis generationat” at 3rd Nobel Turing Challenge Initiative Workshop |

| Apr 28, 2023 | I will serve as senior program chair for WiML un-workshop @ ICML 2023 |

| Aug 26, 2022 | I will serve as an area chair for WiML workshop @ NeurIPS 2022 |

| Mar 21, 2022 | Presented our paper at IEEE Haptics Symposium 2022 |

| Sep 01, 2021 | Joined Sony Research as a Research Scientist |

| Aug 23, 2021 | Presented our paper at ECML-PKDD 2021 |

| Jul 17, 2021 | Defended my PhD thesis |

| Jan 15, 2021 | Presented our paper at IJCAI 2021 |